[Prize Essay Contest] Building Edge-Intelligent AI Applications with EdgeX + OpenVINO

openlab_96bf3613

Updated 1 year ago

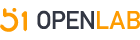

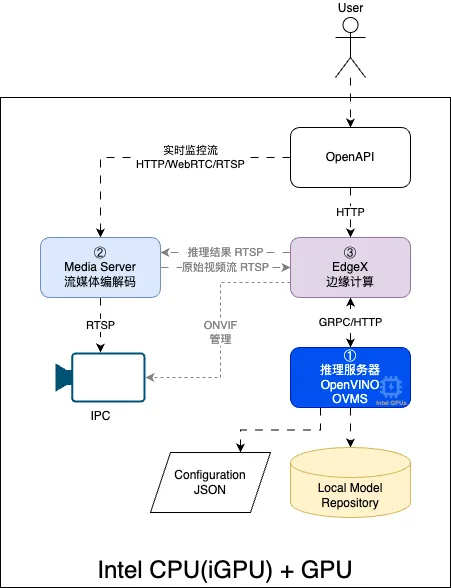

Architecture Diagram

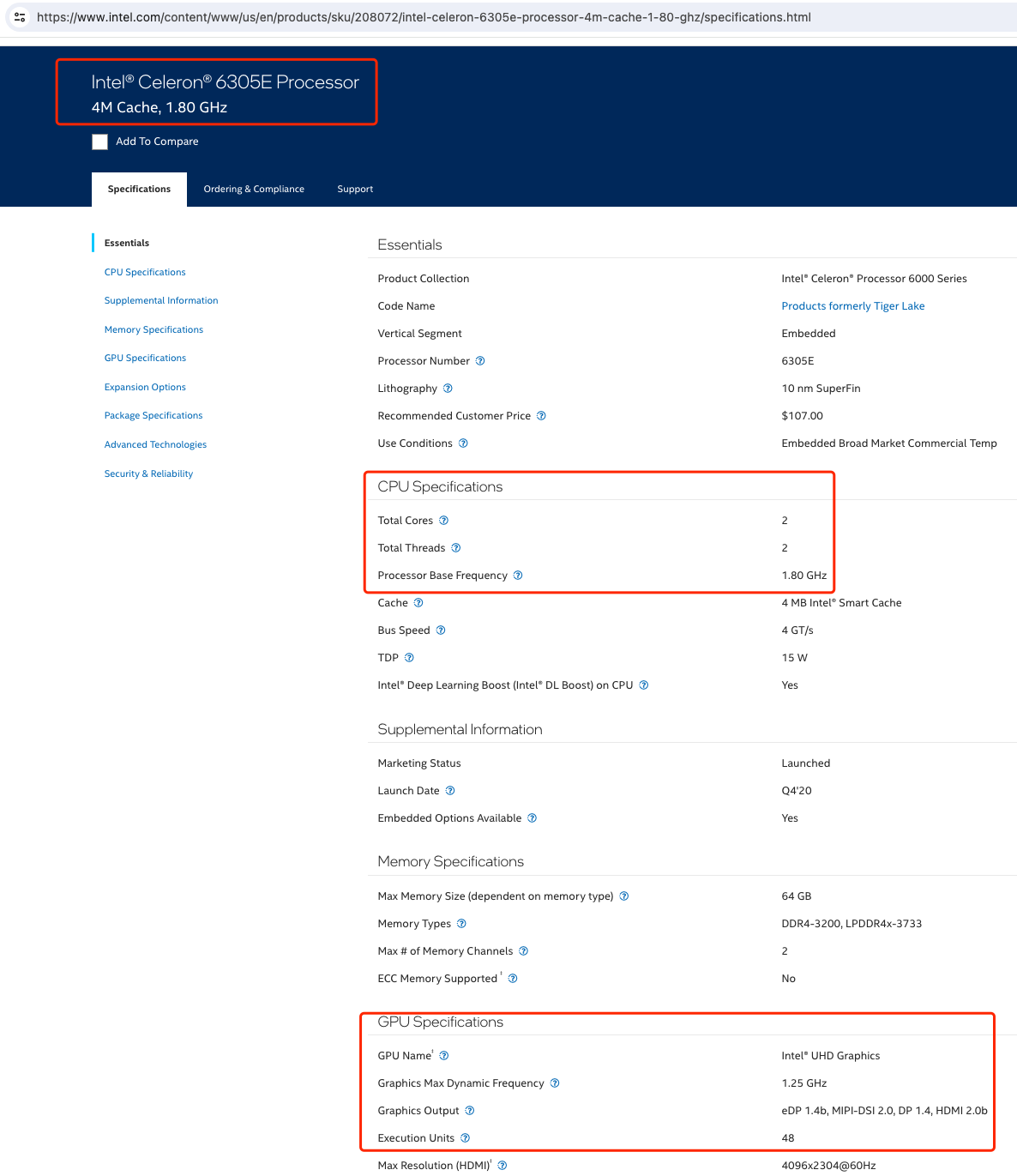

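The hardware design uses an Intel® CPU (with iGPU) plus an optional discrete GPU. The CPU must be Intel® architecture; a discrete GPU can be added as actual needs require.

Every component of the system runs as a Docker microservice, as described below:

In the diagram, ① is the AI inference server, which runs the OpenVINO™ Model Server container;

In the diagram, ② is the streaming media server, which handles encoding and decoding of the media streams and live viewing of the video; it runs YiMEDIA, a Yiqi Software product, also in a container;

In the diagram, ③ is the edge computing layer, which runs the EdgeX Foundry containers and is responsible for coordinating the whole system and driving the business logic.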

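Application Configuration

Hardware Environment

Software Environment

Ubuntu 22.04 serves as the Docker host, and the Docker runtime has already been installed and tested on it.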

<font size="3"># uname -a

Linux YiFUSION-N100 6.5.0-21-generic #21~22.04.1-Ubuntu SMP PREEMPT_DYNAMIC Fri Feb 9 13:32:52 UTC 2 x86_64 x86_64 x86_64 GNU/Linux

# lsb_release -a

Distributor ID: Ubuntu

Description: Ubuntu 22.04.3 LTS

Release: 22.04

Codename: jammy</font>

The CPU's integrated GPU (iGPU) is used for inference.

<font size="3"># ll /dev/dri/

total 0

drwxr-xr-x 3 root root 100 2月 23 17:34 ./

drwxr-xr-x 20 root root 4620 2月 23 20:19 ../

crw-rw----+ 1 root video 226, 0 2月 23 20:58 card0

crw-rw----+ 1 root render 226, 128 2月 23 17:39 renderD128</font>

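OpenVINO Model Server Service

Model repository configuration:

name: the model name

base_path: the path to the model files

target_device: the target inference device

layout: the required image layout (optional)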

<font size="3"># more models/config.json

{

"model_config_list":[

{

"config":{

"name":"ssdlite_mobilenet_v2",

"base_path":"/models/ssdlite_mobilenet_v2",

"target_device": "GPU"

}

}

]

}</font>

The model repository can live either in cloud storage or on local disk; this example uses local disk.

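The first-level directory is named after the model.

The second-level directory is the model version, for example 1, 2, ...

The .xml file describes the model topology in OpenVINO™ IR format, and the .bin file holds the model weights.

The models directory is laid out as follows:

models

├── ssdlite_mobilenet_v2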

│ └── 1

│ ├── coco_91cl_bkgr.txt

│ ├── ssdlite_mobilenet_v2.bin

│ ├── ssdlite_mobilenet_v2.mapping

│ └── ssdlite_mobilenet_v2.xml
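
The layout rules above can be checked mechanically before starting the server. The following is a minimal sketch, not part of the original setup: `build_config` is a hypothetical helper, and it assumes that each IR file is named after its model directory (as in this article) and that the repository is mounted at /models inside the container.

```python
from pathlib import Path


def build_config(models_dir, target_device="GPU"):
    """Build an OVMS-style config dict from a local model repository.

    Assumed layout: <models_dir>/<model_name>/<version>/<model_name>.xml and .bin
    """
    entries = []
    for model_dir in sorted(Path(models_dir).iterdir()):
        if not model_dir.is_dir():
            continue
        name = model_dir.name
        # Version directories are numeric: 1, 2, ...
        versions = [v for v in model_dir.iterdir() if v.is_dir() and v.name.isdigit()]
        # Keep the model only if at least one version holds both IR files.
        has_ir = any(
            (v / f"{name}.xml").is_file() and (v / f"{name}.bin").is_file()
            for v in versions
        )
        if has_ir:
            entries.append({
                "config": {
                    "name": name,
                    # Path as seen inside the container (assumes a /models mount).
                    "base_path": f"/models/{name}",
                    "target_device": target_device,
                }
            })
    return {"model_config_list": entries}
```

Calling `json.dumps(build_config("models"), indent=2)` on the tree shown above should yield a single entry for ssdlite_mobilenet_v2; directories without a complete version folder are skipped rather than reported.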

Start the Model Server container

<font size="3">docker run -d \

--name model_server \

--network edgex_edgex-network \

--device /dev/dri \

-v $(pwd)/models:/models \

-p 9000:9000 \

-p 8000:8000 \

openvino/model_server:latest-gpu \

--config_path /models/config.json \

--port 9000 \

--rest_port 8000 \

--log_level DEBUG</font>

Query the working state of OVMS through its REST API (the /v1/config status endpoint); the response below reports the model that has been loaded, ssdlite_mobilenet_v2.

<font size="3">curl http://192.168.198.8:8000/v1/config

{

"ssdlite_mobilenet_v2": {

"model_version_status": [

{

"version": "1",

"state": "AVAILABLE",

"status": {

"error_code": "OK",

"error_message": "OK"

}

}

]

}

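}</font>

The REST API likewise exposes the model's detailed input and output parameters; the fields that matter most are the dim entries under inputs and outputs.

inputs: the input tensor is named image_tensor and has dtype DT_UINT8, so each value fits in one byte. Its shape is [1,300,300,3]: width 300, height 300, and 3 color channels;

outputs: the output is a four-dimensional tensor of shape [1,1,100,7]; only the last two dimensions matter: 100 rows and 7 columns;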
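
Reading these shapes by eye gets tedious once several models are served. As a rough sketch, the helper below pulls the dtype and shape of every tensor out of a metadata response that has already been parsed into a dict. `tensor_shapes` is a hypothetical name, and the code assumes the TensorFlow Serving style structure (`metadata` / `signature_def` / `signatureDef`) that this article's response uses.

```python
def tensor_shapes(metadata, signature="serving_default"):
    """Return (inputs, outputs), each mapping tensor name -> (dtype, shape)."""
    sig = metadata["metadata"]["signature_def"]["signatureDef"][signature]

    def collect(section):
        result = {}
        for name, spec in sig[section].items():
            # Sizes arrive as strings in the JSON, so convert them to int.
            shape = [int(dim["size"]) for dim in spec["tensorShape"]["dim"]]
            result[name] = (spec["dtype"], shape)
        return result

    return collect("inputs"), collect("outputs")
```

For the response in this article, the inputs map would contain image_tensor with DT_UINT8 and [1, 300, 300, 3], and the outputs map would contain detection_boxes with DT_FLOAT and [1, 1, 100, 7].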

<font size="3">{

"modelSpec": {

"name": "ssdlite_mobilenet_v2",

"signatureName": "",

"version": "1"

},

"metadata": {

"signature_def": {

"@type": "type.googleapis.com/tensorflow.serving.SignatureDefMap",

"signatureDef": {

"serving_default": {

"inputs": {

"image_tensor": {

"dtype": "DT_UINT8",

"tensorShape": {

"dim": [

{

"size": "1",

"name": ""

},

{

"size": "300",

"name": ""

},

{

"size": "300",

"name": ""

},

{

"size": "3",

"name": ""

}

],

"unknownRank": false

},

"name": "image_tensor"

}

},

"outputs": {

"detection_boxes": {

"dtype": "DT_FLOAT",

"tensorShape": {

"dim": [

{

"size": "1",

"name": ""

},

{

"size": "1",

"name": ""

},

{

"size": "100",

"name": ""

},

{

"size": "7",

"name": ""

}

],

"unknownRank": false

},

"name": "detection_boxes"

}

},

"methodName": "",

"defaults": {

}

}

}

}

}

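}</font>

The author uses YiMEDIA, Yiqi Software's own streaming media server, to encode and decode the video streams.

Other frameworks can of course fill the same role, for example:

SRS (Simple Realtime Server)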

go2rtc

MediaMTX (formerly rtsp-simple-server)

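EdgeX Foundry Service

Following the documentation in the EdgeX Foundry edgex-compose repository, create your own EdgeX runtime environment. This test case only needs the core services and the mqtt-broker service.

Whether the Device Service for ONVIF Camera is needed depends on whether EdgeX is to manage the IP cameras (IPC). In this example the service is not enabled for now, and the camera is configured manually.

The OpenVINO device services are a family of microservices that bundle various algorithm libraries and support algorithm invocation and inference.

SSDLITE_MOBILENET_V2: submit an image and receive the object detection results;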

deviceList:

  - name: OpenVINO-Object-Detection

    profileName: OpenVINO-Device

    description: Example of OpenVINO Device

    labels: [AI]

    protocols:

      ovms:

        Host: 192.168.198.8

        Port: 9000

        Model: ssdlite_mobilenet_v2

        Version: 1

        Uri: rtsp://192.168.123.12:18554/test

        Snapshot: false

        Record: false

        Score: 0.3

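This is a basic device service configuration example; adjust it to your actual business needs:

Host: the OVMS service address

Port: the OVMS service port

Model: the model name

Version: the model version

Uri: the IP camera stream URL

Snapshot: whether to take snapshots

Record: whether to record the inference video

Score: the minimum confidence required for an inference result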
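
To make the role of Score concrete, here is a minimal sketch of how a 100 x 7 detection result could be filtered against that threshold. It is not the device service's actual code. It assumes each row follows the usual OpenVINO SSD detection layout, [image_id, label, score, x_min, y_min, x_max, y_max] with coordinates normalized to 0..1, and that a negative image_id marks the end of valid rows; `Detection` and `filter_detections` are hypothetical names.

```python
from typing import NamedTuple


class Detection(NamedTuple):
    label: int
    score: float
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels


def filter_detections(rows, min_score=0.3, width=300, height=300):
    """Keep detections whose confidence is at least min_score."""
    kept = []
    for image_id, label, score, x0, y0, x1, y1 in rows:
        if image_id < 0:
            # Assumed end-of-detections marker; the remaining rows are padding.
            break
        if score >= min_score:
            # Scale the normalized corners to pixel coordinates.
            box = (round(x0 * width), round(y0 * height),
                   round(x1 * width), round(y1 * height))
            kept.append(Detection(int(label), float(score), box))
    return kept
```

With Score set to 0.3 as in the configuration above, a row with confidence 0.9 is kept and a row with confidence 0.2 is dropped. Raising the threshold trades missed objects for fewer false positives.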

The complete EdgeX metadata follows:

{

"apiVersion": "v3",

"statusCode": 200,

"totalCount": 1,

"devices": [

{

"created": 1708691437289,

"modified": 1708693207326,

"id": "6b02c540-eeae-4b61-b741-cdefcf93fd09",

"name": "OpenVINO-Object-Detection",

"description": "Example of OpenVINO Device",

"adminState": "UNLOCKED",

"operatingState": "UP",

"labels": ["AI"],

"serviceName": "device-openvino-ssdlite-mobilenet-v2",

"profileName": "OpenVINO-Device",

"autoEvents": [{}],

"protocols": {

"ovms": {

"Host": "192.168.198.8",

"Model": "ssdlite_mobilenet_v2",

"Port": 9000,

"Record": false,

"Snapshot": false,

"Uri": "rtsp://192.168.123.12:18554/test",

"Version": 1,

"Score": 0.3

}

}

}

]

}
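
A client that needs the inference settings can read them straight from this response. The sketch below is illustrative only: `ovms_endpoints` is a hypothetical helper that takes the response already parsed into a dict and relies on the `protocols.ovms` keys shown above.

```python
def ovms_endpoints(response):
    """Map device name -> connection settings taken from protocols.ovms."""
    endpoints = {}
    for device in response.get("devices", []):
        ovms = device.get("protocols", {}).get("ovms")
        if ovms is None:
            continue  # Not an OVMS-backed device.
        endpoints[device["name"]] = {
            # Host and Port form the gRPC target of the model server.
            "grpc_target": f'{ovms["Host"]}:{ovms["Port"]}',
            "model": ovms["Model"],
            "version": str(ovms["Version"]),
            "stream": ovms["Uri"],
            "min_score": float(ovms["Score"]),
        }
    return endpoints
```

For the device above, this would give a gRPC target of 192.168.198.8:9000 for OpenVINO-Object-Detection, together with the model name, version, stream URL, and score threshold.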

Verifying the Configuration

Once all the containers are running, you should see output similar to the following:

# docker ps --format 'table {{.Image}}\t{{.Names}}'

IMAGE NAMES

①

openvino/model_server:latest-gpu model_server

②

yiqisoft/YiMEDIA yimedia

③

edgexfoundry/device-openvino-ssdlite-mobilenet-v2:0.0.0-dev edgex-device-openvino-resnet

edgexfoundry/app-service-configurable:3.1.0 edgex-app-rules-engine

edgexfoundry/core-data:3.1.0 edgex-core-data

edgexfoundry/core-command:3.1.0 edgex-core-command

edgexfoundry/support-scheduler:3.1.0 edgex-support-scheduler

edgexfoundry/core-common-config-bootstrapper:3.1.0 edgex-core-common-config-bootstrapper

edgexfoundry/support-notifications:3.1.0 edgex-support-notifications

edgexfoundry/core-metadata:3.1.0 edgex-core-metadata

eclipse-mosquitto:2.0.18 edgex-mqtt-broker

edgexfoundry/edgex-ui:3.1.0 edgex-ui-go

redis:7.0.14-alpine edgex-redis

hashicorp/consul:1.16.2 edgex-core-consul

OpenVINO Model Server Logs

# docker logs -f model_server

model_server | [2024-04-11 04:29:29.941][131][serving][debug][kfs_grpc_inference_service.cpp:251] Processing gRPC request for model: ssdlite_mobilenet_v2; version: 1

model_server | [2024-04-11 04:29:29.941][131][serving][debug][kfs_grpc_inference_service.cpp:290] ModelInfer requested name: ssdlite_mobilenet_v2, version: 1

model_server | [2024-04-11 04:29:29.941][131][serving][debug][modelmanager.cpp:1519] Requesting model: ssdlite_mobilenet_v2; version: 1.

model_server | [2024-04-11 04:29:29.941][131][serving][debug][modelinstance.cpp:1054] Model: ssdlite_mobilenet_v2, version: 1 already loaded

model_server | [2024-04-11 04:29:29.941][131][serving][debug][predict_request_validation_utils.cpp:1035] [servable name: ssdlite_mobilenet_v2 version: 1] Validating request containing binary image input: name: image_tensor

model_server | [2024-04-11 04:29:29.941][131][serving][debug][modelinstance.cpp:1234] Getting infer req duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.022 ms

model_server | [2024-04-11 04:29:29.941][131][serving][debug][modelinstance.cpp:1242] Preprocessing duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.000 ms

model_server | [2024-04-11 04:29:29.941][131][serving][debug][deserialization.hpp:449] Request contains input in native file format: image_tensor

model_server | [2024-04-11 04:29:29.943][131][serving][debug][modelinstance.cpp:1252] Deserialization duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 2.492 ms

model_server | [2024-04-11 04:29:29.948][128][serving][debug][kfs_grpc_inference_service.cpp:251] Processing gRPC request for model: ssdlite_mobilenet_v2; version: 1

model_server | [2024-04-11 04:29:29.948][128][serving][debug][kfs_grpc_inference_service.cpp:290] ModelInfer requested name: ssdlite_mobilenet_v2, version: 1

model_server | [2024-04-11 04:29:29.948][128][serving][debug][modelmanager.cpp:1519] Requesting model: ssdlite_mobilenet_v2; version: 1.

model_server | [2024-04-11 04:29:29.948][128][serving][debug][modelinstance.cpp:1054] Model: ssdlite_mobilenet_v2, version: 1 already loaded

model_server | [2024-04-11 04:29:29.948][128][serving][debug][predict_request_validation_utils.cpp:1035] [servable name: ssdlite_mobilenet_v2 version: 1] Validating request containing binary image input: name: image_tensor

model_server | [2024-04-11 04:29:29.951][131][serving][debug][modelinstance.cpp:1260] Prediction duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 7.547 ms

model_server | [2024-04-11 04:29:29.951][131][serving][debug][modelinstance.cpp:1269] Serialization duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.226 ms

model_server | [2024-04-11 04:29:29.951][131][serving][debug][modelinstance.cpp:1277] Postprocessing duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.000 ms

model_server | [2024-04-11 04:29:29.951][131][serving][debug][kfs_grpc_inference_service.cpp:271] Total gRPC request processing time: 10.4070 ms

model_server | [2024-04-11 04:29:29.951][128][serving][debug][modelinstance.cpp:1234] Getting infer req duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 2.850 ms

model_server | [2024-04-11 04:29:29.951][128][serving][debug][modelinstance.cpp:1242] Preprocessing duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.000 ms

model_server | [2024-04-11 04:29:29.951][128][serving][debug][deserialization.hpp:449] Request contains input in native file format: image_tensor

model_server | [2024-04-11 04:29:29.953][128][serving][debug][modelinstance.cpp:1252] Deserialization duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 2.192 ms

model_server | [2024-04-11 04:29:29.961][128][serving][debug][modelinstance.cpp:1260] Prediction duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 7.519 ms

model_server | [2024-04-11 04:29:29.961][128][serving][debug][modelinstance.cpp:1269] Serialization duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.052 ms

model_server | [2024-04-11 04:29:29.961][128][serving][debug][modelinstance.cpp:1277] Postprocessing duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.000 ms

model_server | [2024-04-11 04:29:29.961][128][serving][debug][kfs_grpc_inference_service.cpp:271] Total gRPC request processing time: 12.787 ms

model_server | [2024-04-11 04:29:29.973][132][serving][debug][kfs_grpc_inference_service.cpp:251] Processing gRPC request for model: ssdlite_mobilenet_v2; version: 1

model_server | [2024-04-11 04:29:29.973][132][serving][debug][kfs_grpc_inference_service.cpp:290] ModelInfer requested name: ssdlite_mobilenet_v2, version: 1

model_server | [2024-04-11 04:29:29.973][132][serving][debug][modelmanager.cpp:1519] Requesting model: ssdlite_mobilenet_v2; version: 1.

model_server | [2024-04-11 04:29:29.973][132][serving][debug][modelinstance.cpp:1054] Model: ssdlite_mobilenet_v2, version: 1 already loaded

model_server | [2024-04-11 04:29:29.973][132][serving][debug][predict_request_validation_utils.cpp:1035] [servable name: ssdlite_mobilenet_v2 version: 1] Validating request containing binary image input: name: image_tensor

model_server | [2024-04-11 04:29:29.973][132][serving][debug][modelinstance.cpp:1234] Getting infer req duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.004 ms

model_server | [2024-04-11 04:29:29.973][132][serving][debug][modelinstance.cpp:1242] Preprocessing duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.000 ms

model_server | [2024-04-11 04:29:29.973][132][serving][debug][deserialization.hpp:449] Request contains input in native file format: image_tensor

model_server | [2024-04-11 04:29:29.976][132][serving][debug][modelinstance.cpp:1252] Deserialization duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 2.705 ms

model_server | [2024-04-11 04:29:29.983][132][serving][debug][modelinstance.cpp:1260] Prediction duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 7.461 ms

model_server | [2024-04-11 04:29:29.983][132][serving][debug][modelinstance.cpp:1269] Serialization duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.049 ms

model_server | [2024-04-11 04:29:29.983][132][serving][debug][modelinstance.cpp:1277] Postprocessing duration in model ssdlite_mobilenet_v2, version 1, nireq 0: 0.000 ms

model_server | [2024-04-11 04:29:29.983][132][serving][debug][kfs_grpc_inference_service.cpp:271] Total gRPC request processing time: 10.4050 ms

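The log reports the inference device in use (Used device: CPU); the device can also be changed to AUTO or GPU through target_device in config.json.

The time spent on each request is logged as Total gRPC request processing time. The three requests in the excerpt above took 10.407 ms, 12.787 ms, and 10.405 ms, so overall latency sits in the 10-15 ms range per request, of which roughly 7.5 ms is the prediction itself.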
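
The same figures can be pulled out of a longer log automatically. This is a small sketch using only the standard library; `latency_summary` is a hypothetical helper, and the pattern matches the "Total gRPC request processing time" lines exactly as they appear in the debug log above.

```python
import re
import statistics

# Matches e.g. "Total gRPC request processing time: 12.787 ms"
TOTAL_RE = re.compile(r"Total gRPC request processing time: ([0-9.]+) ms")


def latency_summary(log_text):
    """Summarize per-request latency (in ms) found in OVMS debug log text."""
    values = [float(m.group(1)) for m in TOTAL_RE.finditer(log_text)]
    if not values:
        return None  # No completed requests in this log excerpt.
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": statistics.mean(values),
    }
```

Feeding it the output of docker logs model_server gives the request count together with the minimum, maximum, and mean latency, which is a quicker way to compare CPU, GPU, and AUTO runs than scanning the log by hand.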

Inference Results

The inference results can be viewed in real time in a web browser as an MJPEG stream.